Compare Boulder Opal performance against competitive products

Evaluating Boulder Opal performance across multiple benchmarks

Boulder Opal shows significant time savings on key virtual engineering tasks when compared to C3 and QuTiP, especially as the compute load of the task increases. Additionally, Boulder Opal cloud acceleration means users can apply more compute resources to further reduce development times.

Introduction

Benchmarks are a common industry practice to determine the performance of quantum computing systems. However, a key step in designing high-performance quantum computing systems is quantum computing simulation. These virtual tools allow physicists, researchers, and engineers to iterate on experiments more quickly before building and running them on actual hardware. The speed and accuracy of these tools have a direct impact on the resulting performance of the quantum computing system.

Boulder Opal accelerates the design and implementation of the control systems that quantum computers depend on. By connecting the simulation, creation, optimization, and automation of quantum controls across a common solution, quantum computing teams can be confident in their ability to get peak performance out of their quantum hardware, without spending weeks or even months on calculation intensive tasks.

At Q-CTRL, benchmarking is performed continuously to test the performance of the latest quantum control technologies. In this topic, we document some of the latest benchmarking results achieved across different control software tools for quantum computing. These results can help set expectations for the improvements you will see with Boulder Opal, but we still encourage you to try it yourself, as Boulder Opal's quantum control capabilities are constantly improving.

In this performance briefing, benchmark tests were run across two key phases in quantum control development: simulation and optimization. These phases allow for more rapid design before physical experiments are performed.

Simulations recreate a quantum system (hardware, software, controls, etc.) in a classical computer by solving the equations that dictate its dynamics. To evaluate simulation performance, benchmark tests were conducted across several system examples with varying degrees of complexity. After a simulation is dialled in, quantum engineers may want to optimize certain parts of their design, for instance the control signals being sent to the quantum device.

Metrics

The key metrics for simulation and optimization solutions are speed and accuracy.

- Speed: The time it takes for a calculation task to complete. Depending on the complexity of the task and the compute resources available, this could be measured in seconds, minutes, hours, or even days.

- Accuracy: How well a simulation or optimization task replicates the real world and generates results that match a physical system. Because exactly simulating the dynamics of a physical quantum system is very compute intensive, different simulation methods use different approximations to the quantum equations, which lead to errors of different magnitudes.

There is an inherent tradeoff between these two metrics. The faster a simulation or optimization task can be completed, the faster a quantum engineering team can move in their design process. However, in order to be more accurate, these solutions will likely need to be more complex, therefore increasing the time it takes to be completed. In the end, the key goal of quantum engineering teams is to advance quantum computing technologies, as fast as possible, and within a certain budget. When evaluating simulation and optimization solutions, users should consider how well the solution can help them achieve their target end goal.

In this series of tests, we focused specifically on speed—the runtime needed to complete various tasks.
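As a rough illustration of how a runtime comparison like this can be set up, the sketch below times a task with a wall-clock timer and reports the ratio between two runtimes. It is a minimal harness under stated assumptions, not the procedure used for the results in this briefing: the helper names `time_task` and `speedup`, the choice of the median over five repeats, and the two stand-in workloads are all hypothetical.

```python
import statistics
import time


def time_task(task, repeats=5):
    """Return the median wall-clock runtime of task() in seconds."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        task()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def speedup(baseline_seconds, candidate_seconds):
    """Factor by which the candidate is faster than the baseline."""
    return baseline_seconds / candidate_seconds


# Hypothetical stand-in workloads; a real benchmark would call each tool here.
def slow_task():
    sum(i * i for i in range(200_000))


def fast_task():
    sum(i * i for i in range(20_000))


if __name__ == "__main__":
    ratio = speedup(time_task(slow_task), time_task(fast_task))
    print(f"speedup: {ratio:.1f}x")
```

Taking the median of several repeats reduces the influence of one-off delays such as background processes or first-call warm-up.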

Configurations

In these benchmark tests, popular industry solutions were used to evaluate performance metrics across the targeted simulation and optimization tasks.

- Boulder Opal: The hardware design, AI-based automation, and quantum control optimization toolkit from Q-CTRL.

- QuTiP 4.7.1: An open-source quantum toolbox in Python.

- C3 1.4: An open source toolset for control, calibration, and characterization.

In the first phase, all solutions were run locally on personal computer hardware. This was to allow for like-to-like performance comparisons between the solutions, isolating the software differences. The relevant personal computing configuration details are shown with each test evaluation below.

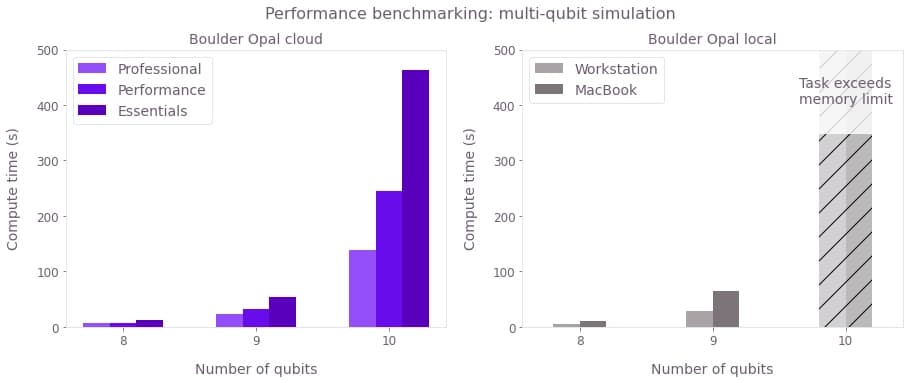

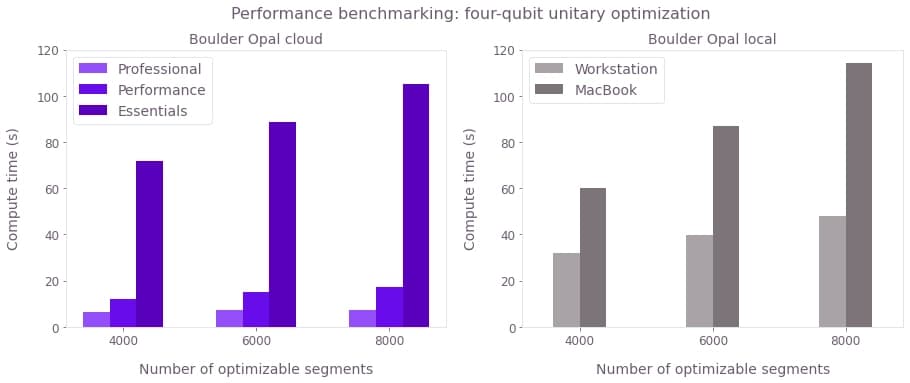

However, Boulder Opal also has several cloud acceleration options that allow for even better performance. Where these apply, extra Boulder Opal data is provided to show the further speedup. Boulder Opal cloud-accelerated testing was run on three environments:

- Boulder Opal Essentials: 8 vCPU, 64 GB RAM machine, 1 machine

- Boulder Opal Performance: 16 vCPU, 128 GB RAM machine, 4 machines

- Boulder Opal Professional: 32 vCPU, 256 GB RAM machine, 16 machines

Refer to this topic for tips on how to run parallel tasks if your plan supports concurrent calculations.

Cloud acceleration

The different Boulder Opal cloud plans can provide performance improvements for many compute-intensive tasks. This improvement will depend on a variety of factors within the workload, but generic benchmarks can give a rough frame of reference for the expected performance boost across similar simulation and optimization workloads. The Essentials plan often performs worse than local execution because its compute resources are smaller than those of the laptops used for the local tests.

Simulation

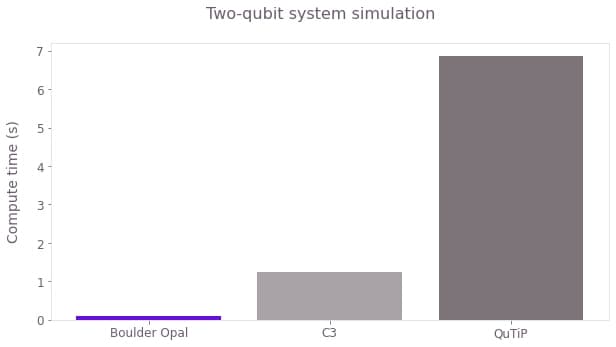

Two-qubit system

The most fundamental simulation activity is to replicate smaller quantum systems of just a few qubits. While these tasks do not take much time individually, they are often run repeatedly to test different variables in the system. In this case, two-qubit simulations were performed, showing a significant speedup with Boulder Opal: 76.1× and 13.6× improvements over QuTiP and C3, respectively.

While saving 1 to 7 seconds on a given task may not be impactful, the benefits of a faster simulation package become evident when assessing simulations with varying inputs. When sweeping a parameter over many values, these small per-run savings add up to a large reduction in total calculation time, improving the efficiency of the experiment and shortening the path to impactful discoveries.
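To make the accumulation concrete, the short calculation below multiplies a per-run saving by the number of points in a sweep. The sweep size of 10,000 points is a hypothetical figure chosen for illustration; only the 1 to 7 second range comes from the results above.

```python
def sweep_saving_hours(seconds_saved_per_run, sweep_points):
    """Total time saved over a parameter sweep, in hours."""
    return seconds_saved_per_run * sweep_points / 3600.0


SWEEP_POINTS = 10_000  # hypothetical sweep size, not taken from the benchmarks

for saved in (1.0, 7.0):
    hours = sweep_saving_hours(saved, SWEEP_POINTS)
    print(f"{saved:.0f} s saved per run -> {hours:.1f} h saved over the sweep")
```

Under that assumption, the saving over the whole sweep is roughly 2.8 hours at 1 second per run and roughly 19.4 hours at 7 seconds per run.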

To better see the performance benefits of Boulder Opal, more complex (and useful) simulations can be used, for example in spin chain and EIT cooling simulations.

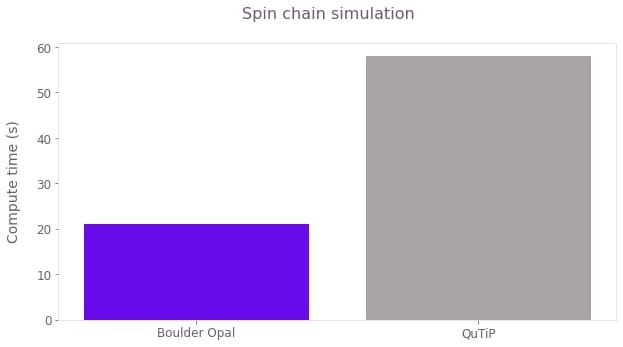

Spin chain

Spin qubits can be modelled as a series of connected qubits, or a chain. As the number of qubits increases, the complexity of the simulation increases exponentially. In a 10-qubit simulation, Boulder Opal is 2.7× quicker than QuTiP.
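The exponential growth follows from the state space of $n$ qubits having dimension $2^n$. The sketch below computes that dimension and the memory a single dense operator would need. Dense storage with 16-byte complex entries is an assumption made for illustration; the tools compared here may use sparse or other representations, and the function names are hypothetical.

```python
def hilbert_dimension(num_qubits):
    """Dimension of the state space of num_qubits two-level systems."""
    return 2 ** num_qubits


def dense_operator_mib(num_qubits, bytes_per_entry=16):
    """Memory in MiB for one dense operator, assuming 16-byte complex entries."""
    dim = hilbert_dimension(num_qubits)
    return dim * dim * bytes_per_entry / 2 ** 20


for n in (2, 6, 10):
    print(n, hilbert_dimension(n), dense_operator_mib(n))
```

For the 10-qubit chain this gives a dimension of 1024 and 16 MiB per dense operator, and each additional qubit multiplies that memory by four.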

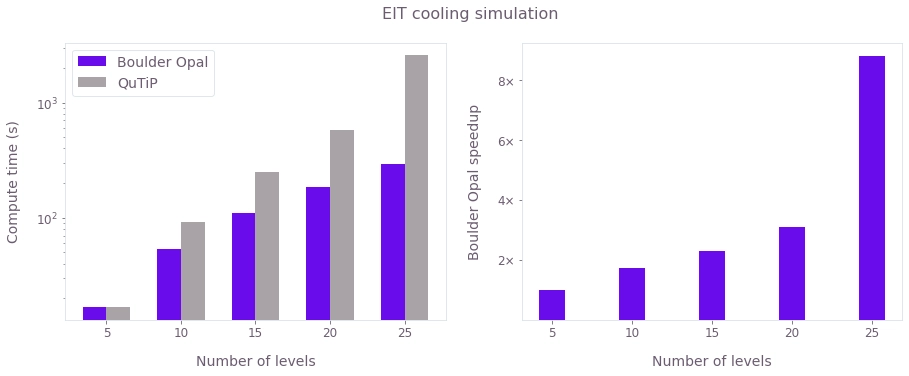

EIT cooling

Electromagnetically induced transparency (EIT) cooling is a method used in trapped-ion quantum computing hardware to simultaneously cool the entire array to the ground state. These effects are simulated with a 3-level ion coupled to its quantized motional mode, represented by a maximum of $N$ levels.

Increasing $N$ directly increases the complexity of the system and of the corresponding simulation. For small system sizes, QuTiP and Boulder Opal have similar performance. As the complexity increases, however, QuTiP's simulation time grows more steeply. Boulder Opal scales more consistently and predictably, and at 25 levels it is over 8× faster.
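To show what truncating the motional mode to $N$ levels means, the sketch below builds the truncated annihilation operator, whose only nonzero entries are $\sqrt{n}$ connecting level $n$ to level $n-1$, and computes the size of the combined ion-plus-mode state space. This is a generic textbook construction written in plain Python, not the model code behind these benchmarks, and the function names are hypothetical.

```python
import math


def annihilation_operator(n_levels):
    """Truncated annihilation operator as a nested list: a[n-1][n] = sqrt(n)."""
    a = [[0.0] * n_levels for _ in range(n_levels)]
    for n in range(1, n_levels):
        a[n - 1][n] = math.sqrt(n)
    return a


def composite_dimension(n_levels, ion_levels=3):
    """Dimension of the ion-plus-mode space: ion levels times motional levels."""
    return ion_levels * n_levels


a = annihilation_operator(4)
print(a[0][1], a[1][2], a[2][3])
print(composite_dimension(25))
```

With a 3-level ion the state space has dimension $3N$, so $N = 25$ gives 75. If the dissipative dynamics are treated with a density matrix (an assumption, since the method is not specified here), the object being evolved has $(3N)^2$ entries.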

Optimization

After simulations are validated, quantum engineers can begin the real work of optimizing and improving those systems for peak performance. For example, they will want to find the ideal pulses for maximum gate fidelities. These optimizations are critical to the stability and overall performance of the quantum computing system.

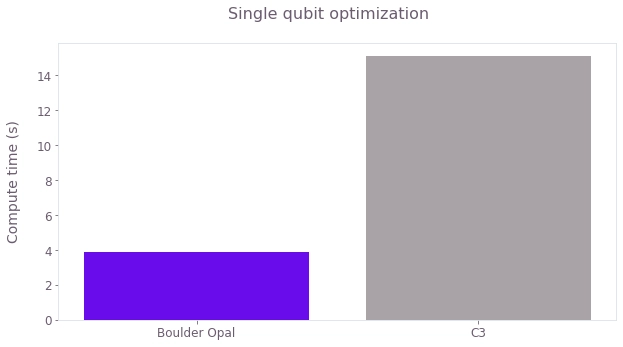

Single qubit system

Starting with just a single qubit, users can optimize the most basic unit of the quantum computer. Boulder Opal is 3.95× faster in this scenario.
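As a toy picture of what pulse optimization for gate fidelity involves, the sketch below tunes a single parameter, the Rabi rate $\Omega$ of a constant resonant drive, so that $U = \exp(-i\,\Omega T \sigma_x / 2)$ implements an X gate. For this model the infidelity against X (up to a global phase) is $\cos^2(\Omega T / 2)$, which is minimized by plain gradient descent. This is an illustrative assumption-laden example only: it does not use the Boulder Opal, QuTiP, or C3 APIs, it is not the benchmarked workload, and the function names, learning rate, and step count are hypothetical.

```python
import math


def x_gate_infidelity(rabi_rate, duration):
    """Infidelity of exp(-i * rabi_rate * duration * sigma_x / 2) against X."""
    return math.cos(rabi_rate * duration / 2.0) ** 2


def optimize_rabi_rate(duration, initial_rate, learning_rate=1.0, steps=200):
    """Minimize the infidelity over the Rabi rate by gradient descent."""
    rate = initial_rate
    for _ in range(steps):
        # Analytic gradient: d/d(rate) cos^2(rate * T / 2) = -(T / 2) * sin(rate * T)
        gradient = -(duration / 2.0) * math.sin(rate * duration)
        rate -= learning_rate * gradient
    return rate


best_rate = optimize_rabi_rate(duration=1.0, initial_rate=1.0)
print(best_rate, x_gate_infidelity(best_rate, 1.0))
```

The optimizer converges to $\Omega T = \pi$, the familiar π-pulse condition. Realistic optimizations involve many pulse parameters, noise, and hardware constraints, which is where runtime differences between tools become significant.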

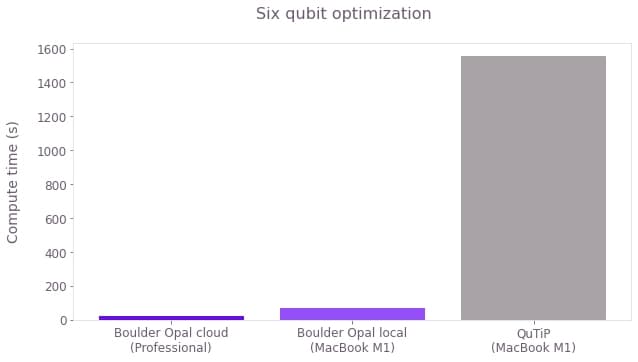

Six qubit system

Adding more qubits increases the complexity of the optimization. Again, the Boulder Opal improvements are even more evident for these compute-intensive workloads, outperforming QuTiP by 91×.

Conclusions

After virtual systems are optimized to the best of their abilities, quantum engineers must transition to real hardware. This poses a new set of challenges still relevant to Boulder Opal optimization routines. Further performance benchmarks will be run as real hardware becomes more readily available.

In the meantime, a few Q-CTRL real-world use cases demonstrate the Boulder Opal advantage. For more information, read our case studies with Nord Quantique and Northwestern University.